Key sitemap generator changes

We’ve been making a number of changes to our online sitemap generator. Some of these will filter down to G-Mapper over the coming weeks. It’s important to understand these recent changes as they impact how we spider your website.

Hosting updates

We recently completed a migration to new hosting on Microsoft Azure to simplify the management and deployment of our services. We hope this will reduce downtime and deployment errors, as well as allow us to scale more easily.

Most of the major changes are now complete, so any further minor updates should cause minimal disruption.

Canonical URLs

Because some sites misuse canonical URLs or implement them poorly, we will no longer automatically redirect to a canonical URL on an alternate domain.

Instead, by default we will process the page within the context of the current domain and log warnings about any canonical URLs we find.

If you enable the option to obey canonical URLs, we will assume you are spidering the correct domain. When we see a canonical URL:

- If it matches the current page, we will include the current page.

- If it is within the current domain, we will ignore the current page and its content and add the canonical URL to the list to be spidered.

- If it is outside the current domain, we will ignore the page and its content.
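Taken together with the default behaviour, the rules above amount to a small decision function. The Python below is a minimal sketch under stated assumptions, not our actual spider code: `handle_canonical`, `CanonicalAction` and the `obey_canonical` flag are hypothetical names, pages are compared by host, path and query (ignoring scheme and fragment), and the default mode is assumed to warn only when the canonical URL differs from the current page.

```python
from enum import Enum
from typing import Optional, Tuple
from urllib.parse import urljoin, urlsplit


class CanonicalAction(Enum):
    INCLUDE_PAGE = "include the current page"
    QUEUE_CANONICAL = "ignore the page, queue the canonical URL"
    SKIP_PAGE = "ignore the page and its content"
    WARN_ONLY = "include the page, log a warning"


def _key(url: str) -> Tuple[str, str, str]:
    """Host, path and query of a URL (assumption: scheme and fragment are ignored)."""
    parts = urlsplit(url)
    return parts.netloc.lower(), parts.path or "/", parts.query


def handle_canonical(page_url: str, canonical_href: Optional[str],
                     obey_canonical: bool) -> Tuple[CanonicalAction, Optional[str]]:
    """Decide what to do with a page, given its rel="canonical" href (if any)."""
    if not canonical_href:
        return CanonicalAction.INCLUDE_PAGE, None

    # Resolve relative hrefs such as "/page" against the current page URL.
    canonical = urljoin(page_url, canonical_href)
    page_key, canon_key = _key(page_url), _key(canonical)

    if page_key == canon_key:
        # Canonical points at this page: include it in either mode.
        return CanonicalAction.INCLUDE_PAGE, None

    if not obey_canonical:
        # Default: stay on the current domain, keep the page, warn in the log.
        return CanonicalAction.WARN_ONLY, canonical

    if canon_key[0] == page_key[0]:
        # Same host: drop this page and spider the canonical URL instead.
        return CanonicalAction.QUEUE_CANONICAL, canonical

    # Different host: ignore the page and its content.
    return CanonicalAction.SKIP_PAGE, None
```

For example, with the option enabled, a page at `https://example.com/a` whose canonical href is `/b` yields `QUEUE_CANONICAL` with `https://example.com/b`, while a canonical on another host yields `SKIP_PAGE`.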

Robots meta and rel nofollow / noindex

Some sites ended up with no pages, or with pages missing, because of their use of the robots meta tag and the rel="nofollow" attribute, and we have received negative feedback as a result.

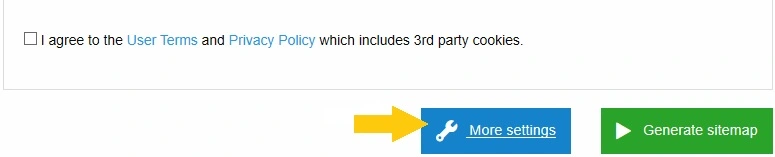

By default, we will now ignore these directives and log warnings instead. If you want our spider to obey robots meta tags or rel attributes, please use the advanced settings.

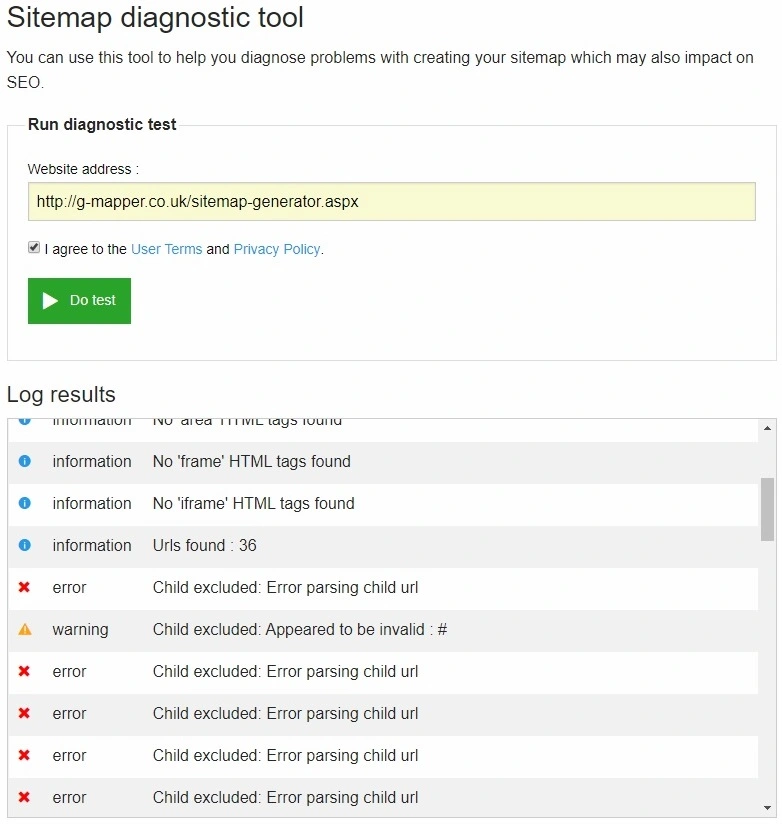
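As a rough illustration of the new default, the sketch below parses a page with Python's standard `html.parser`, collects robots meta directives and rel="nofollow" links, and either obeys them or ignores them while recording warnings. It is a hypothetical example rather than our spider's implementation: `scan_page`, `RobotsScanner`, the `obey_robots` flag (standing in for the advanced setting) and the warning texts are all assumptions.

```python
from html.parser import HTMLParser
from typing import List, Set, Tuple


class RobotsScanner(HTMLParser):
    """Collects robots meta directives and <a href> links, noting rel="nofollow"."""

    def __init__(self) -> None:
        super().__init__()
        self.robots: Set[str] = set()
        self.links: List[Tuple[str, bool]] = []  # (href, has rel="nofollow")

    def handle_starttag(self, tag, attrs):
        # html.parser lower-cases tag and attribute names; values may be None.
        a = {name: (value or "") for name, value in attrs}
        if tag == "meta" and a.get("name", "").lower() == "robots":
            for directive in a.get("content", "").split(","):
                if directive.strip():
                    self.robots.add(directive.strip().lower())
        elif tag == "a" and a.get("href"):
            rels = a.get("rel", "").lower().split()
            self.links.append((a["href"], "nofollow" in rels))


def scan_page(html: str, obey_robots: bool) -> Tuple[bool, List[str], List[str]]:
    """Return (include_page, links_to_follow, warnings)."""
    scanner = RobotsScanner()
    scanner.feed(html)
    scanner.close()

    noindex = bool(scanner.robots & {"noindex", "none"})
    nofollow_page = bool(scanner.robots & {"nofollow", "none"})
    nofollow_links = [href for href, nofollow in scanner.links if nofollow]

    if obey_robots:
        # Advanced setting enabled: honour the directives.
        links = [] if nofollow_page else [h for h, nf in scanner.links if not nf]
        return not noindex, links, []

    # Default: ignore the directives but record what was ignored.
    warnings = []
    if noindex:
        warnings.append("Ignored robots meta noindex")
    if nofollow_page:
        warnings.append("Ignored robots meta nofollow")
    if nofollow_links:
        warnings.append(f"Ignored rel=nofollow on {len(nofollow_links)} link(s)")
    return True, [href for href, _ in scanner.links], warnings
```

In the default mode every link is followed and the page is always included, so the only trace of the directives is in the warnings list.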

Diagnostic tool

The diagnostic tool has been updated to give a more realistic view of how our spider sees your page. It now outputs a verbose log of how it processed your page. The same details are also included in the log file that comes with your sitemap download.

When a spider session returns no results or only one page, the log details for the homepage will be displayed on the results page. This is often because the website is blocking our spider.

Other changes

We’ve changed our user agent header to imitate common browsers, because some websites were returning an HTTP 403 Forbidden error. If your site still blocks us with a 403, we will try to detect this and notify you.
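A minimal sketch of the idea using Python's standard `urllib.request`: send browser-like headers, and if the server still answers 403, return a warning instead of failing silently. The User-Agent string, `build_request`, `fetch` and the warning text are hypothetical; the headers our spider actually sends are not shown here.

```python
import urllib.error
import urllib.request
from typing import Optional, Tuple

# Hypothetical browser-like User-Agent; the exact string our spider sends may differ.
BROWSER_UA = ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
              "AppleWebKit/537.36 (KHTML, like Gecko) "
              "Chrome/120.0.0.0 Safari/537.36")


def build_request(url: str) -> urllib.request.Request:
    """Build a GET request whose headers resemble a common desktop browser."""
    return urllib.request.Request(url, headers={
        "User-Agent": BROWSER_UA,
        "Accept": "text/html,application/xhtml+xml;q=0.9,*/*;q=0.8",
    })


def fetch(url: str, timeout: float = 10.0) -> Tuple[int, bytes, Optional[str]]:
    """Return (status, body, warning); warning is set if the site answers 403."""
    try:
        with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
            return resp.status, resp.read(), None
    except urllib.error.HTTPError as err:
        if err.code == 403:
            # Still blocked despite the browser-like headers: report it to the user.
            return 403, b"", "HTTP 403 Forbidden: the site appears to be blocking our spider"
        raise
```

Other HTTP errors are re-raised here so that a 403 block is reported separately from ordinary failures such as a 404 or 500.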

Gzip and deflate compression were not working, which caused excessive bandwidth use. We have now fixed this, which should improve performance.

This has allowed us to increase the maximum page size to 300 KB uncompressed, because on some sites we were not finding URLs on pages larger than the previous limit. Pages this large will be flagged with a warning, as this is exceptionally large for a page.
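The two changes fit together: the spider asks for a compressed response, decompresses it according to the `Content-Encoding` header, and applies the size limit to the uncompressed HTML. This Python sketch uses only `gzip` and `zlib` from the standard library; `decode_body`, `check_page`, treating 300 KB as 300 × 1024 bytes, and truncating oversized pages with a warning are assumptions for illustration, not a description of our exact behaviour.

```python
import gzip
import zlib
from typing import List, Optional, Tuple

# Ask the server for a compressed response.
REQUEST_HEADERS = {"Accept-Encoding": "gzip, deflate"}

# Assumed limit: 300 KB of decompressed HTML (300 * 1024 bytes).
MAX_PAGE_BYTES = 300 * 1024


def decode_body(raw: bytes, content_encoding: Optional[str]) -> bytes:
    """Undo a gzip or deflate Content-Encoding; other bodies pass through unchanged."""
    encoding = (content_encoding or "").strip().lower()
    if encoding in ("gzip", "x-gzip"):
        return gzip.decompress(raw)
    if encoding == "deflate":
        try:
            return zlib.decompress(raw)  # zlib-wrapped deflate, as HTTP specifies
        except zlib.error:
            # Some servers send raw deflate data without the zlib header.
            return zlib.decompress(raw, -zlib.MAX_WBITS)
    return raw


def check_page(raw: bytes, content_encoding: Optional[str]) -> Tuple[bytes, List[str]]:
    """Decompress a response, then enforce the size limit on the uncompressed HTML."""
    body = decode_body(raw, content_encoding)
    warnings: List[str] = []
    if len(body) > MAX_PAGE_BYTES:
        # Assumed behaviour: keep the first MAX_PAGE_BYTES and log a warning.
        warnings.append(
            f"Page is {len(body)} bytes uncompressed; only the first "
            f"{MAX_PAGE_BYTES} bytes were processed")
        body = body[:MAX_PAGE_BYTES]
    return body, warnings
```

Measuring the limit after decompression means a page is judged by the HTML the spider actually parses, regardless of how well it compresses on the wire.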

If your site uses JavaScript to detect bots and blocks our spider, we will try to detect this and notify you. In particular, we’re noticing that a number of sites use the “testcookie nginx” module. We are working on a better solution, but this requires further investigation.
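Cookie-based bot checks of this kind typically serve a small page that sets a cookie from script and then redirects, instead of the real content. The Python below is a hypothetical heuristic for spotting such a response; the regular expressions, the 5,000-character threshold and `looks_like_js_cookie_challenge` are assumptions, not how our detection actually works, and real challenge pages may not match them.

```python
import re

# Hypothetical heuristics; real bot-protection pages vary and may not match.
_SETS_COOKIE = re.compile(r"document\.cookie\s*=", re.IGNORECASE)
_JS_REDIRECT = re.compile(
    r"location(?:\.href)?\s*=(?!=)|location\.(?:replace|assign)\s*\(", re.IGNORECASE)
_HAS_LINK = re.compile(r"<a\s[^>]*href\s*=", re.IGNORECASE)


def looks_like_js_cookie_challenge(html: str, max_len: int = 5000) -> bool:
    """Guess whether a response is a JavaScript cookie challenge, not real content.

    Flags small pages that set a cookie from script, redirect from script,
    and contain no ordinary links for the spider to follow.
    """
    return (len(html) <= max_len
            and _SETS_COOKIE.search(html) is not None
            and _JS_REDIRECT.search(html) is not None
            and _HAS_LINK.search(html) is None)
```

Because a spider that does not run JavaScript never receives the cookie, such a site returns the same challenge page for every URL, which is why a session can end with one page or none.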

We’ve also been making general improvements and bug fixes to our spider code to make it more reliable and robust. These should benefit G-Mapper users once they roll out to our Windows software.

Support us

Don’t forget that we have updated our service so that you can contribute and receive some benefits in return. Please consider supporting us to help keep the project alive.